Esther Sun

About Me

I am a Master’s student at Carnegie Mellon University (Class of 2026), where I focus on Multimodal Machine Learning. I am privileged to be supervised by Prof. Carlos Busso. My academic journey began at the University of Toronto, where I earned an Honors Bachelor of Science with a double major in Computer Science and Statistical Science. During my undergraduate years, I also spent time as an exchange student at Tsinghua University’s Institute for Interdisciplinary Information Sciences (IIIS/Yao Class).

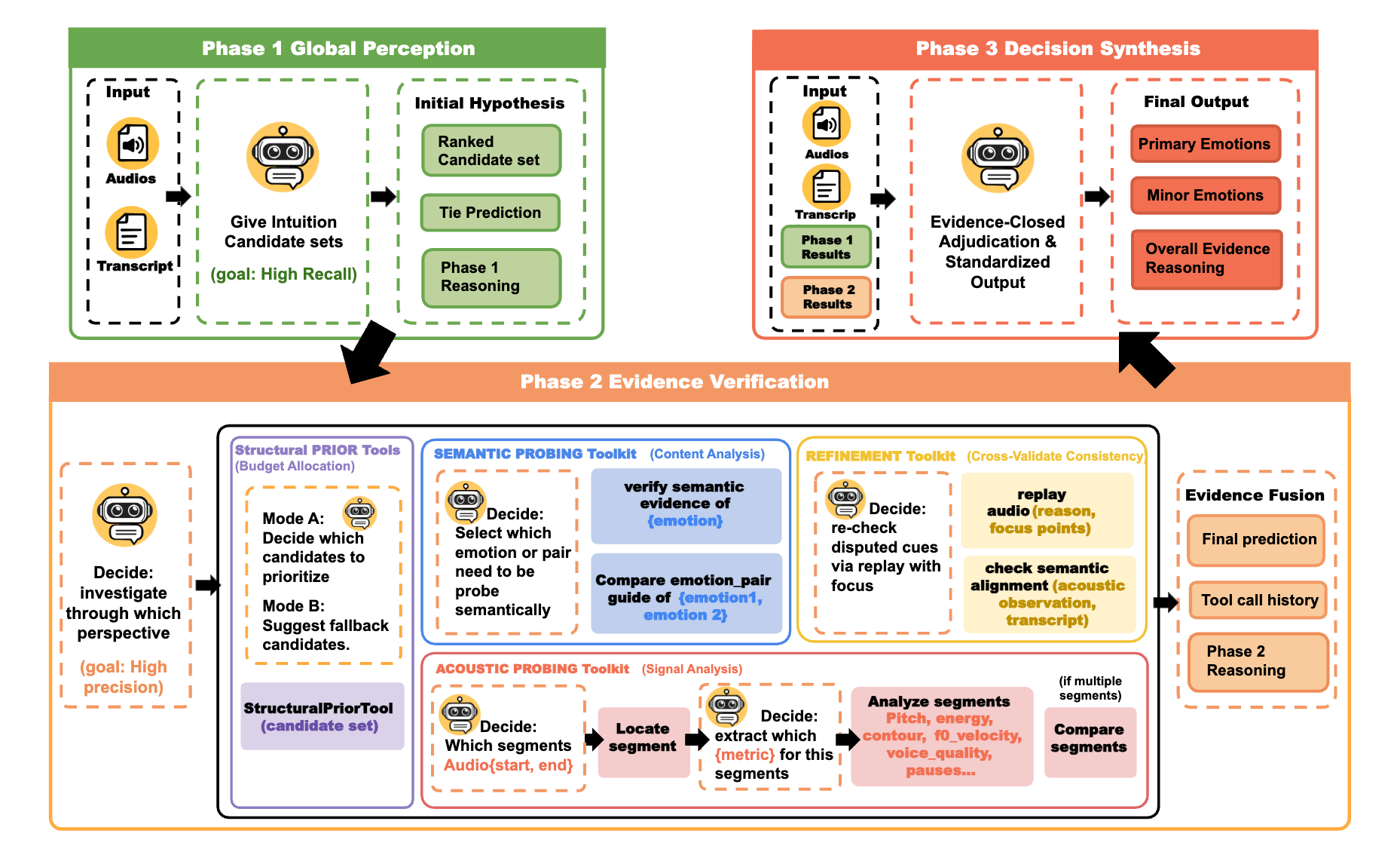

My research has moved from early work on multimodal CV–NLP systems (particularly explainable image forgery detection and multimodal fact verification) toward a current specialization in Affective Computing and Emotional Intelligence, with a focus on multimodal emotion recognition and AI personas. My recent work centers on understanding emotional ambiguity in real-world human communication. One example is ADEPT (Agentic Decoding of Emotion via Probing Tools), which combines multimodal large language models with reinforcement learning (GRPO) to generate evidence-grounded rationales for ambiguous emotional states. I believe the future of AI lies not just in providing the “right” answer, but in communicating with emotional intelligence and context-aware empathy, so that agents respond in the most appropriate and human-centric manner.

Education

Carnegie Mellon University

Master’s Student | 📍 Pittsburgh, USA

Focus: Multimodal Machine Learning

University of Toronto

Honors B.Sc. | 📍 Toronto, Canada

Double Major: Computer Science & Statistical Science

Tsinghua University

Exchange Student | IIIS (Yao Class) | 📍 Beijing, China

Publications

First Author | 🔗 Paper

Agentic LLM reasoning · Tool-augmented inference · RL alignment (GRPO)

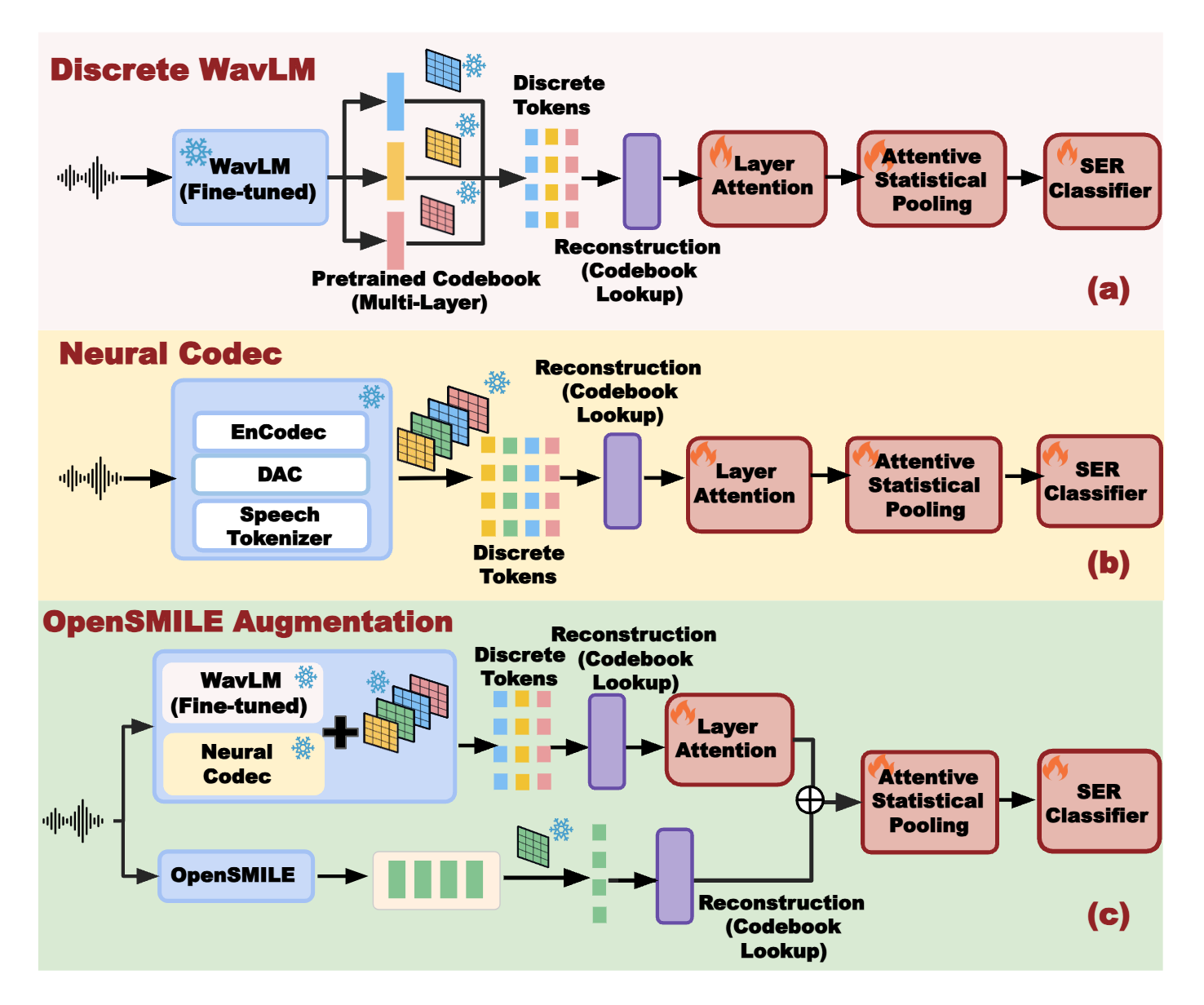

First Author | 🔗 Paper

Discrete Audio Tokenization · Multi-layer Attention Fusion · SSL · Neural Codecs

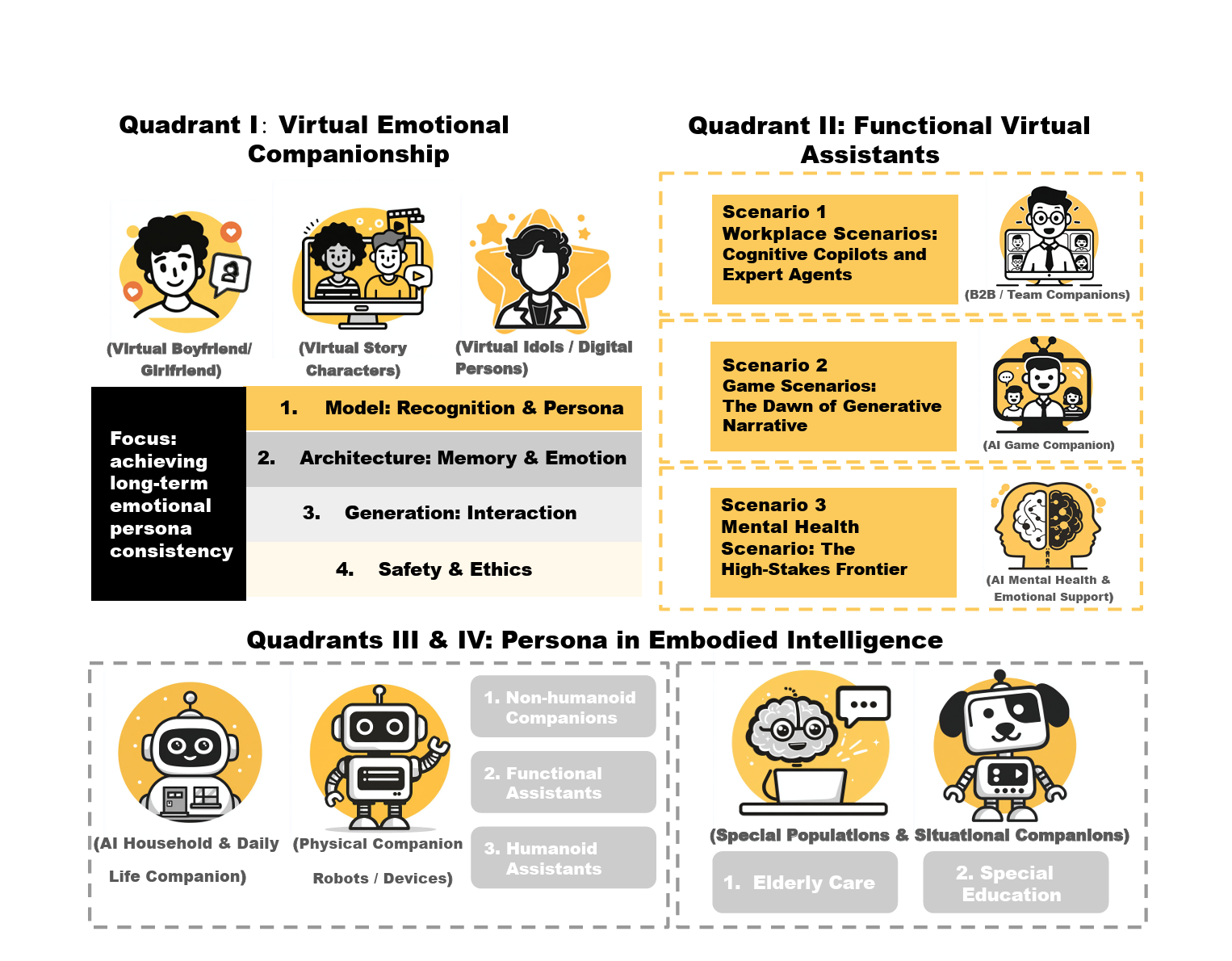

Systematizing LLM Persona Design: A Four-Quadrant Technical Taxonomy for AI Companion Applications

First Author | 🔗 Paper

AI Companionship · Embodied Intelligence · Technical Taxonomy

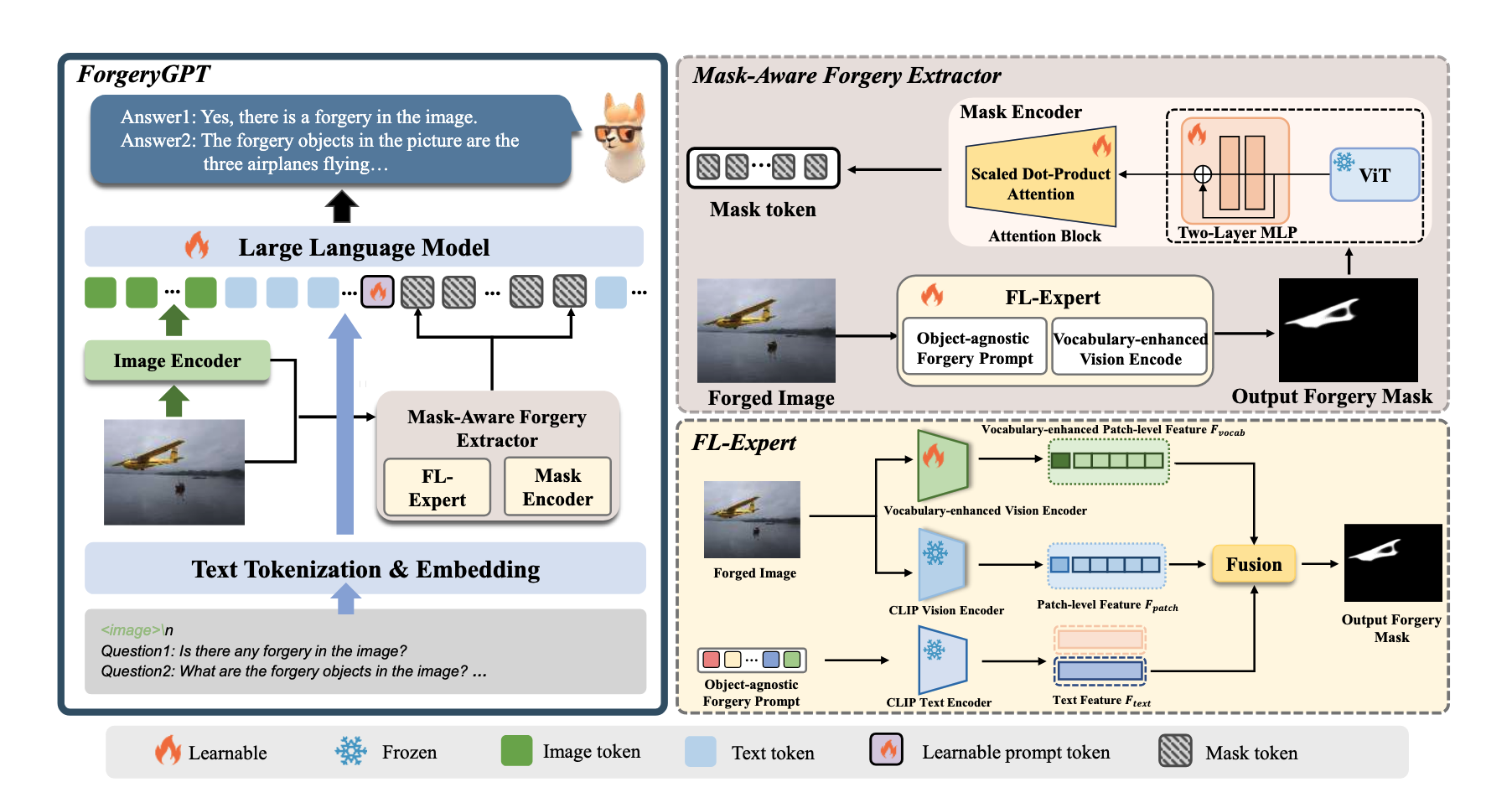

ForgeryGPT: Multimodal Large Language Model for Explainable Image Forgery Detection and Localization

Co-author | 🔗 Paper

Fine-grained forgery localization · Vision–language reasoning · Multimodal LLM grounding

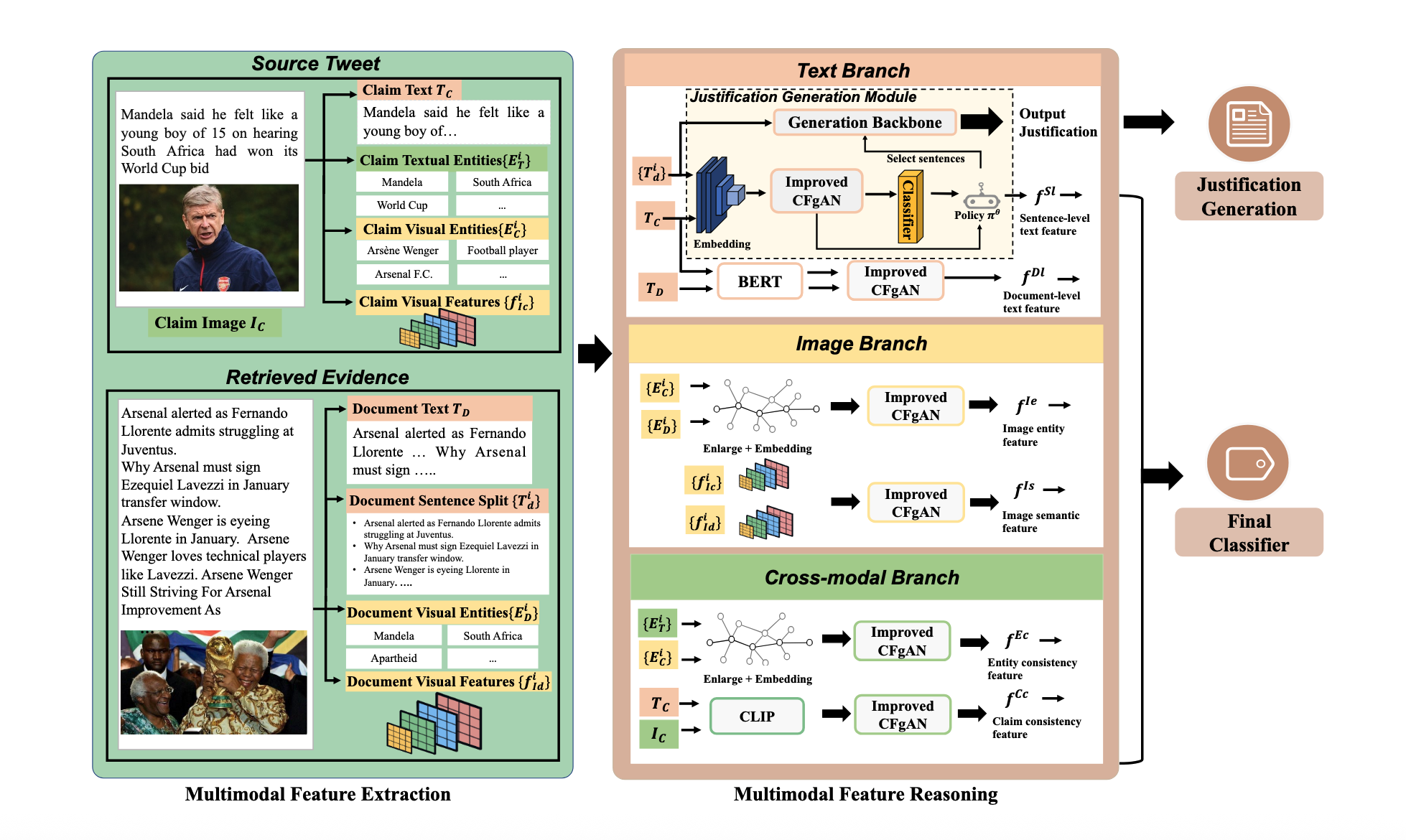

ECENet: Explainable and Context-Enhanced Network for Multimodal Fact Verification

Co-author | 🔗 Paper

Dual-granularity Attention · Cross-modal Alignment · Hierarchical Reasoning

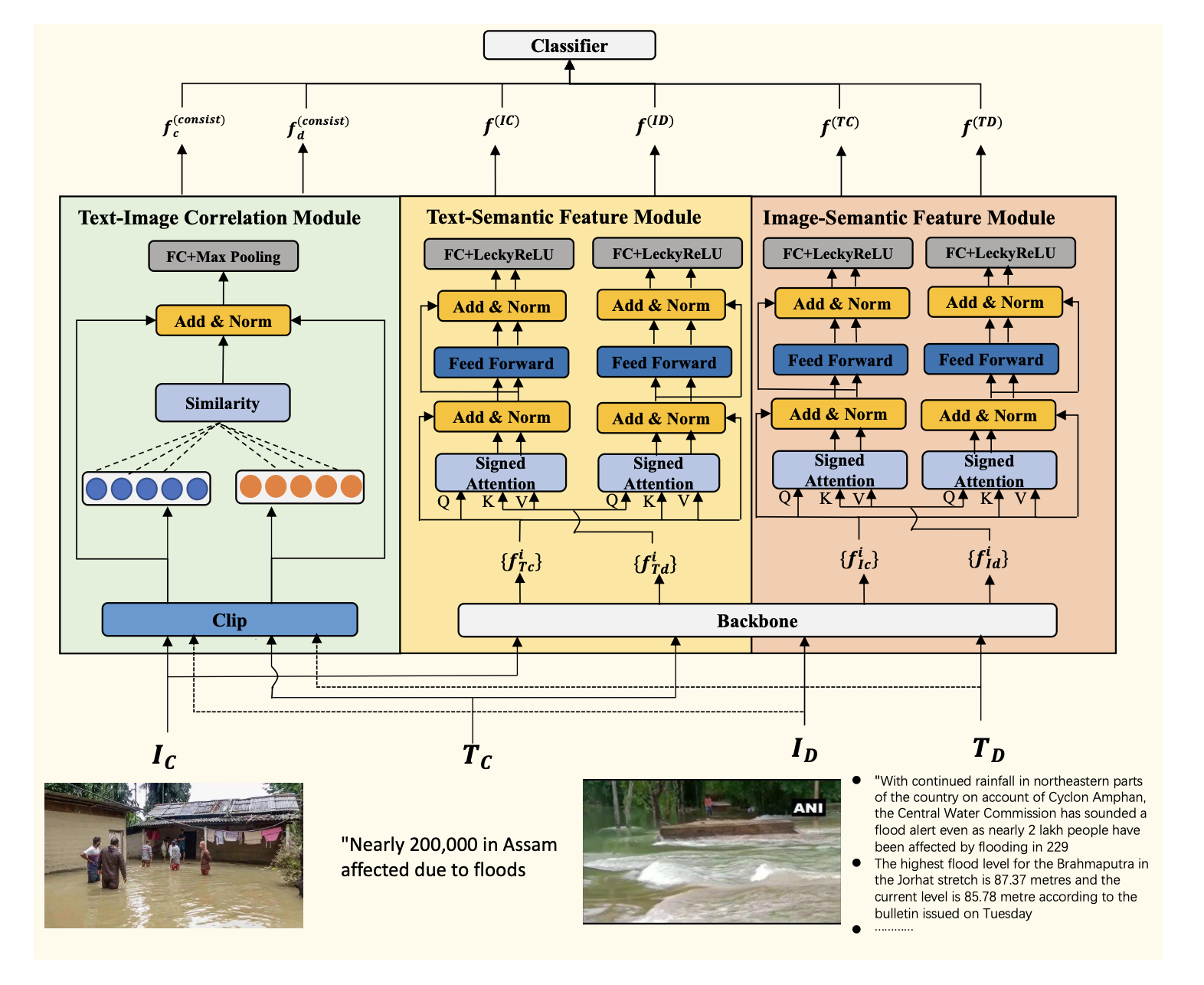

Unimodal Feature-Enhanced and Cross-Modal Correlation Learning for Multimodal Fact Verification

Co-author | 🔗 Paper

Multimodal feature engineering · Cross-modal correlation learning

Professional Experience

Data Scientist Intern — Moneris

📍 Toronto, Canada · May 2022 – Sep 2023 (16 months)

Machine Learning Systems · Imbalanced Learning · Feature Engineering · Production ML Pipelines

Art Studio Assistant

Summer 2019

Illustration & Visual Storytelling · Visual Design · Color Composition